
RCAN: This paper’s authors propose deep residual channel attention networks (RCAN). The role of blocks is to avoid wasting time with low-frequency info and use this knowledge in the next block. That information processing method allows for better performance, even when the number of blocks increases. The general idea behind all algorithms is an extensive neural network with the objective of learning high-frequency information and several blocks of neural networks that learn low-frequency information. The idea behind super-resolution algorithms These features, allowed us to test the algorithms in our images and draw some conclusions. It was chosen because it allows us to see the results obtained with the author’s conditions plus, it provides a git repository with code (such as a Notebook) ready for reproducing the experiments in our environment (for example, Google Colab). The source for the algorithms is the Paperwithcode. Structures and description of SR algorithms Choosing the algorithms This method is unstable in cases where the variance or luminescence of the reference image is low therefore, in medical imaging, for example, this metric could have inconsistent results. Structural similarity index (SSIM): This metric compares the reference image’s contrast, luminescence, and structural details with the reference image.

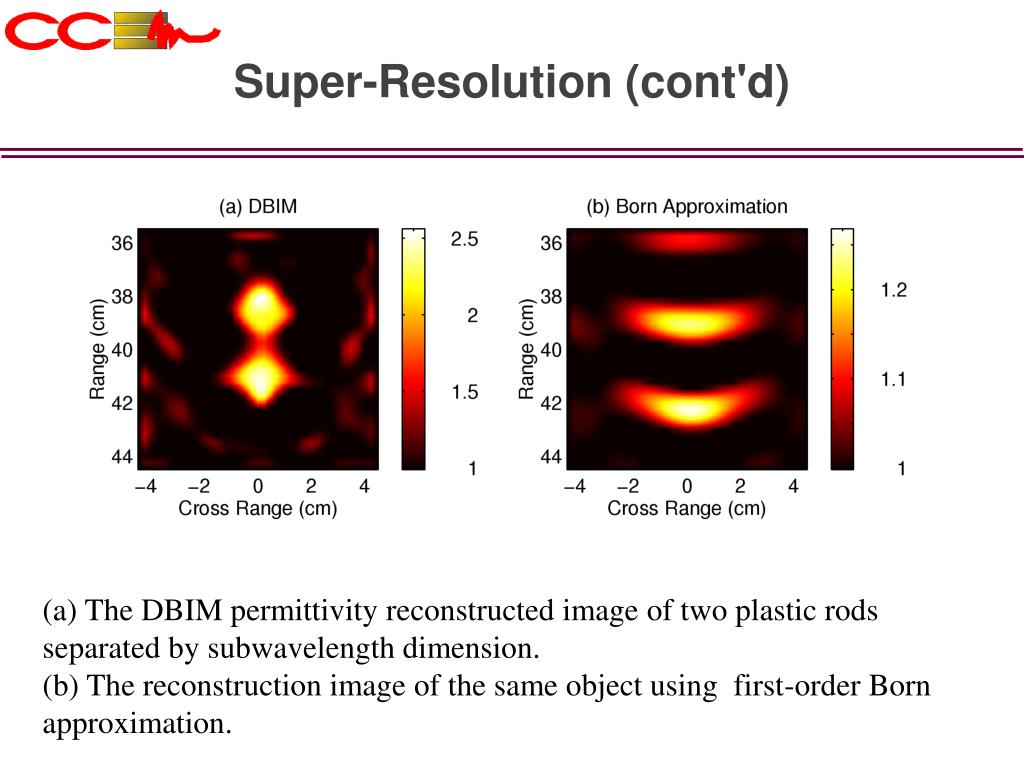

A disadvantage of this method is that it doesn’t consider the structural information within the image. Peak signal-to-noise ratio (PSNR): It’s a qualitative measure of image quality compression, defined by the maximum pixel value and the mean square error between the reference image and the SR image, also known as the power of image distortion noise. Plus, these metrics were all in the academic paper we based our research on. Although there are several metrics, we selected those with no implicit human subjectivity. The metrics used for measuring image quality are vital. The transformer concept is also widely applied to NLP problems, such as RCAN, ESRGAN, SRFBN, RDN, and SwinIR. Reinforcement Learning algorithms have been used recently. The algorithms are based on Convolutional Neural Network (CNN) and Generative Adversarial Network (GAN). The Deep Learning (DL) approach: the most common method.The classic approach: uses statistical methods, prediction-based methods, and sparse representation methods to achieve super-resolution.There are two approaches to solve the super-resolution problem: Another idea behind SR is to find patterns and tendencies from the HR images and reconstruct the image in LR, something like an inverse solution. Super-resolution aims to extract a High-Resolution (HR) image from its respective Low-Resolution (LR) one. What is Super-Resolution and how does it work? This blog aims to compare different state-of-the-art algorithms for SR and their application in the industry, stating if the academic work is actually reproducible for practical use. Practical applications of SR problems, need to make sense of academic data, pipelines and features transformation. However, the industry can’t wait that much time due to the constant change in its landscape.

Because academic work involves a lot of validation and later publishing of the algorithms, it can take several years. The difference between them is time frames. There are two different approaches: the academic and the “practical” approach.

One fascinating problem to solve within the computer vision field is super-resolution (SR).

Computer vision is a popular application of AI through cellphones, video conference platforms, and video vigilance cameras, among other use cases. Nowadays, everyone is exposed to artificial intelligence (AI) in one way or another.
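RCAN’s channel attention, combined with the residual (skip) connection of each block, can be illustrated with a minimal sketch. It is written in plain Python on nested lists laid out as [channel][row][column], so it runs without a deep learning framework. Several parts are hypothetical: the function names, the weight arguments, and the `body` callable, which stands in for the conv-ReLU-conv path of a block. The weights are not trained. The sketch only shows the data flow:

1. Pool each channel to one number.
2. Pass those descriptors through a small bottleneck.
3. Squash the result into gates between 0 and 1.
4. Rescale the channels and add the block input back.

```python
import math
from typing import Callable, List, Tuple

# Hypothetical layout: feature maps as nested lists [channel][row][column].
FeatureMap = List[List[List[float]]]
Matrix = List[List[float]]
AttentionParams = Tuple[Matrix, List[float], Matrix, List[float]]


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def dense(weights: Matrix, bias: List[float], vec: List[float]) -> List[float]:
    """A 1x1 convolution on a 1x1 map is a fully connected layer."""
    return [sum(w * v for w, v in zip(row, vec)) + b for row, b in zip(weights, bias)]


def channel_attention(features: FeatureMap, w_down: Matrix, b_down: List[float],
                      w_up: Matrix, b_up: List[float]) -> FeatureMap:
    """Rescale each channel by a gate between 0 and 1 computed from all channels."""
    # 1. Global average pooling: one descriptor per channel.
    pooled = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in features]
    # 2. Channel-downscaling layer (C -> C/r) followed by ReLU.
    hidden = [max(0.0, h) for h in dense(w_down, b_down, pooled)]
    # 3. Channel-upscaling layer (C/r -> C) followed by a sigmoid gate.
    gates = [sigmoid(g) for g in dense(w_up, b_up, hidden)]
    # 4. Multiply every value in channel c by gate c.
    return [[[g * v for v in row] for row in ch] for g, ch in zip(gates, features)]


def rcab(features: FeatureMap, body: Callable[[FeatureMap], FeatureMap],
         params: AttentionParams) -> FeatureMap:
    """Residual channel attention block: x + CA(body(x)).

    `body` stands in for the conv-ReLU-conv path; the skip connection passes
    the input (including its low-frequency content) straight to the output.
    """
    attended = channel_attention(body(features), *params)
    return [[[x + y for x, y in zip(xr, yr)] for xr, yr in zip(xc, yc)]
            for xc, yc in zip(features, attended)]
```

With all-zero weights every gate is sigmoid(0) = 0.5. In that case `channel_attention` halves each channel, and `rcab` with an identity `body` returns 1.5 times its input. Trained weights would instead give each channel its own gate.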

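PSNR can be written as PSNR = 10 · log10(MAX² / MSE). Here MAX is the maximum possible pixel value (255 for 8-bit images), and MSE is the mean squared error between the reference and SR images. The following is a minimal pure-Python sketch of that formula. It assumes single-channel (grayscale) images given as equally sized lists of rows. The names `mse` and `psnr` are illustrative, not taken from a specific library.

```python
import math
from typing import Sequence

# Assumption: grayscale images given as equally sized rows of pixel values.
Image = Sequence[Sequence[float]]


def mse(reference: Image, restored: Image) -> float:
    """Mean squared error, i.e. the power of the distortion noise."""
    if len(reference) != len(restored) or any(
        len(a) != len(b) for a, b in zip(reference, restored)
    ):
        raise ValueError("images must have the same shape")
    diffs = [(a - b) ** 2 for ra, rb in zip(reference, restored) for a, b in zip(ra, rb)]
    if not diffs:
        raise ValueError("images must not be empty")
    return sum(diffs) / len(diffs)


def psnr(reference: Image, restored: Image, max_value: float = 255.0) -> float:
    """PSNR in decibels: 10 * log10(MAX^2 / MSE)."""
    error = mse(reference, restored)
    if error == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(max_value ** 2 / error)
```

For example, `psnr([[0, 255], [255, 0]], [[0, 255], [255, 10]])` has an MSE of 100 / 4 = 25, so the PSNR is 10 · log10(255² / 25), about 34.15 dB. Identical images have zero MSE, which is why the sketch returns infinity for them. The score depends only on pixel-wise differences, which reflects the lack of structural information mentioned for this metric.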

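SSIM combines two terms. The luminance term is built from the image means, and the contrast-structure term is built from the variances and the covariance:

SSIM(x, y) = ((2μxμy + C1)(2σxy + C2)) / ((μx² + μy² + C1)(σx² + σy² + C2))

Here C1 = (0.01 · L)² and C2 = (0.03 · L)² for a pixel range L. The sketch below is a simplified, single-window version computed over the whole image in plain Python. The standard SSIM instead averages this quantity over local windows (an 11×11 Gaussian window in the original formulation), so its values will differ from library implementations. `global_ssim` is a hypothetical name.

```python
from typing import List, Sequence

# Assumption: grayscale images given as rows of pixel values.
Image = Sequence[Sequence[float]]


def _flatten(image: Image) -> List[float]:
    return [float(v) for row in image for v in row]


def global_ssim(reference: Image, restored: Image, max_value: float = 255.0,
                k1: float = 0.01, k2: float = 0.03) -> float:
    """Single-window SSIM over the whole image (a simplification)."""
    x, y = _flatten(reference), _flatten(restored)
    if not x or len(x) != len(y):
        raise ValueError("images must be non-empty with the same number of pixels")
    n = len(x)
    mu_x, mu_y = sum(x) / n, sum(y) / n
    # Population (1/N) variances and covariance.
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    # C1 and C2 keep both ratios defined when means or variances are near zero.
    c1 = (k1 * max_value) ** 2
    c2 = (k2 * max_value) ** 2
    luminance = (2 * mu_x * mu_y + c1) / (mu_x ** 2 + mu_y ** 2 + c1)
    contrast_structure = (2 * cov + c2) / (var_x + var_y + c2)
    return luminance * contrast_structure
```

Comparing an image with itself gives 1. An inverted copy gives a negative value because the covariance is negative. The constants matter for flat regions: with two constant images and `k2=0`, the contrast-structure term would become 0 / 0. This shows why the metric becomes unstable when variance or luminance is close to zero.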